Most business owners treat AI tools the way they treat a smoke alarm: install it, test it once, and assume it will work when needed. The reality is far more complicated. Undetected issues in fairness, data quality, or regulatory compliance can quietly accumulate inside your AI systems, surfacing only when the damage is already done. For Australian businesses operating in an increasingly scrutinised environment, understanding what an AI audit is and how to apply one is no longer optional. This guide covers what AI audits are, how they work, which frameworks matter, and what the latest benchmarks reveal about the AI tools you may already be using.

Table of Contents

- What is an AI audit?

- The phases of an AI audit: How does it work?

- Key frameworks and tools for AI audits

- Benchmarks, results, and what audits reveal

- Edge cases and challenges: What most AI audits miss

- Australian standards, compliance, and the future of AI audits

- Expert perspectives: How to get real value from your AI audits

- Transform your AI strategy with trusted audits

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI audits explained simply | AI audits are structured reviews that help you identify and fix risks in fairness, security, or compliance across your AI systems. |
| Frameworks and best practice | Use proven international and Australian standards to ensure your audits are robust and meaningful. |
| Australian regulations evolving | AI audits in Australia are expected to become mandatory for high-risk use, so proactive compliance is smart business. |
| Common audit gaps | Be aware of edge cases and blind spots like black-box bias and audit awareness, especially in complex or generative AI. |
| Value beyond compliance | AI audits protect reputation, build customer trust, and optimise how AI benefits your business. |

What is an AI audit?

An AI audit is a structured, independent review of an AI system to determine whether it is performing as intended and whether it meets ethical, legal, and operational standards. According to a widely cited definition, AI audits systematically evaluate AI systems for transparency, fairness, bias, performance, security, compliance with regulations, and ethical standards across the full AI lifecycle. That is a broad scope, and deliberately so.

For Australian business owners, the practical value of an audit lies in what it uncovers. AI models can develop blind spots, amplify biases present in training data, or drift in performance over time without any visible warning signs. An audit brings these issues to the surface before they affect customers, staff, or your reputation.

A thorough AI audit checklist typically covers the following areas:

- Transparency: Can the system explain its decisions in plain terms?

- Fairness and bias: Does the model treat all user groups equitably?

- Performance: Is the system accurate and reliable under real-world conditions?

- Security: Is the system protected against manipulation or data breaches?

- Regulatory compliance: Does it meet Australian privacy laws and relevant standards?

- Ethical standards: Does it align with your organisation's values and community expectations?

Understanding these dimensions is the first step. If you are still mapping out your broader AI implementation steps, an audit framework should be built into that plan from the outset, not bolted on afterwards.

The phases of an AI audit: How does it work?

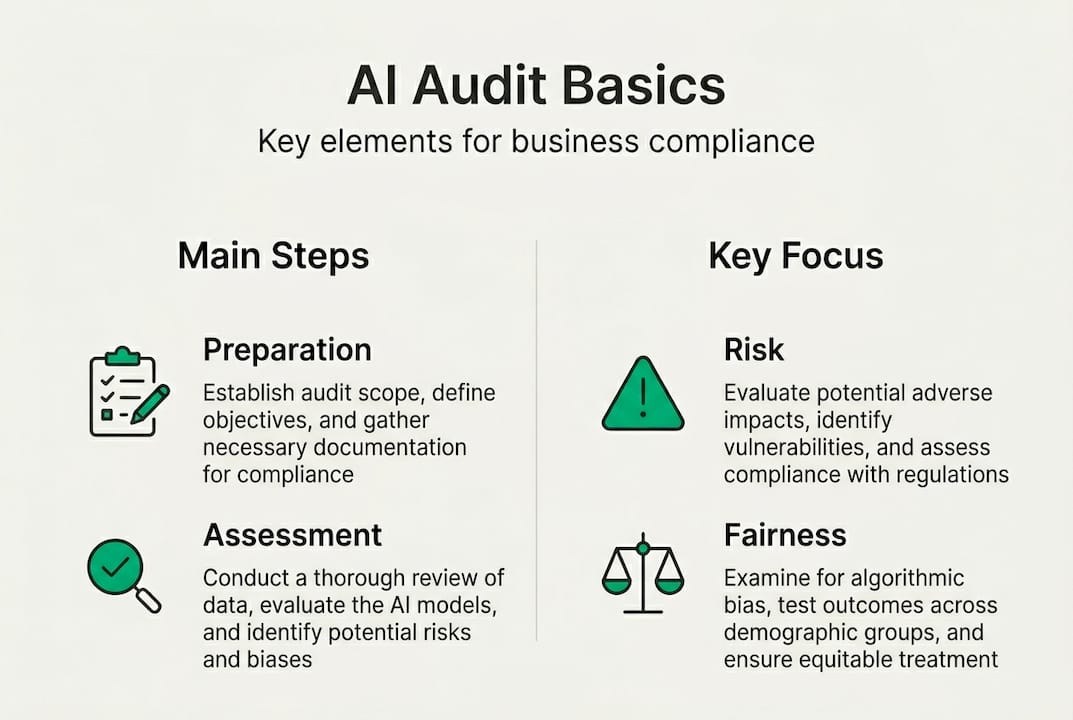

AI audits follow a structured process. Core methodologies cover preparation and planning, data collection, model evaluation, ethical and legal compliance review, documentation of findings, and iterative monitoring and mitigation. In practice, these activities are often grouped into five phases:

- Preparation: Define the audit scope, identify stakeholders, and establish what success looks like.

- Data governance review: Assess the quality, provenance, and privacy compliance of training and operational data.

- Fairness and bias testing: Run the model against diverse test cases to identify discriminatory outputs or skewed results.

- Compliance review: Check alignment with Australian privacy law, sector-specific regulations, and relevant technical standards.

- Remediation and monitoring: Address identified issues, implement fixes, and set up ongoing monitoring to catch future drift.

| Phase | What it covers | Key deliverable | Who is involved |
|---|---|---|---|
| Preparation | Scope, risk appetite, stakeholder mapping | Audit plan | Leadership, IT, legal |
| Data governance | Data quality, privacy, provenance | Data assessment report | Data team, compliance |
| Fairness testing | Bias detection, equity analysis | Fairness scorecard | AI team, HR, legal |
| Compliance review | Regulatory alignment, documentation | Compliance gap report | Legal, risk, operations |
| Remediation | Fixes, controls, monitoring setup | Remediation roadmap | All teams |

Pro Tip: Document every phase thoroughly. Detailed records not only satisfy regulators but also make future audits significantly faster and cheaper.
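One lightweight way to keep such records is an append-only log in which each entry stores a hash of the previous one, so later edits become detectable. The sketch below is a minimal illustration rather than a compliance-grade system. The field names (`phase`, `finding`, `owner`) and the function names are assumptions made for this example.

```python
import hashlib
import json
from datetime import datetime, timezone


def _digest(body):
    """Hash a canonical JSON form so identical content always hashes the same."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def make_entry(phase, finding, owner, prev_hash=""):
    """Build one audit-log entry linked to the previous entry's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "phase": phase,      # hypothetical field: which audit phase
        "finding": finding,  # hypothetical field: what was observed
        "owner": owner,      # hypothetical field: who is responsible
        "prev_hash": prev_hash,
    }
    return {**body, "hash": _digest(body)}


def verify_chain(entries):
    """Return True if no entry has been altered or reordered."""
    prev_hash = ""
    for entry in entries:
        body = {k: v for k, v in entry.items() if k != "hash"}
        if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log = []
log.append(make_entry("Preparation", "Scope agreed with leadership", "legal"))
log.append(
    make_entry(
        "Data governance",
        "Provenance gap in CRM export",
        "data team",
        prev_hash=log[-1]["hash"],
    )
)
print(verify_chain(log))
```

A check like `verify_chain` only shows that existing entries were not changed after the fact. It does not detect entries removed from the end of the log, and it does not replace proper access controls or backups.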

Audits are not a one-off exercise. As your AI systems evolve and your use cases expand, the audit process must keep pace. Businesses investing in custom AI solutions will find that bespoke systems require equally tailored audit approaches. Responsible AI frameworks from major technology providers offer useful governance scaffolding to support this ongoing work.
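Ongoing monitoring can start with something as simple as comparing recent model inputs or scores against a baseline captured at audit time. The sketch below uses the Population Stability Index (PSI), one common drift measure chosen here purely for illustration. The bin count, the `eps` floor, and the sample data are assumptions.

```python
import math


def psi(expected, actual, bins=10, eps=1e-6):
    """Population Stability Index between a baseline and a recent sample."""
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # fall back if the baseline is constant

    def proportions(values):
        counts = [0] * bins
        for v in values:
            # Values outside the baseline range are clamped into the edge bins.
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = proportions(expected), proportions(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Made-up scores: a baseline from audit time and a shifted recent sample.
baseline = [i / 100 for i in range(100)]
shifted = [x + 0.5 for x in baseline]

print(psi(baseline, baseline))
print(psi(baseline, shifted))
```

A widely quoted rule of thumb treats a PSI below 0.1 as stable and above 0.25 as a significant shift, but these cut-offs are conventions, not regulatory thresholds, and should be tuned to your own risk appetite.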

Key frameworks and tools for AI audits

Choosing the right framework for your AI audit depends on your industry, risk profile, and regulatory obligations. Several established frameworks, toolkits, and regulations are worth knowing:

| Framework | Primary focus | Best suited for |
|---|---|---|
| NIST AI RMF | Risk management, governance | Broad enterprise use |
| IBM AI Fairness 360 | Bias detection and mitigation | Data-heavy applications |
| Microsoft Responsible AI | Ethics, transparency, safety | Microsoft ecosystem users |
| EU AI Act / COMPL-AI | Regulatory compliance, safety | High-risk AI applications |

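These options differ in kind: NIST AI RMF is a risk management framework, IBM AI Fairness 360 is an open-source toolkit, Microsoft Responsible AI is a vendor governance standard, and the EU AI Act is a regulation. Together they provide structured approaches to governance that Australian businesses can adapt to local requirements.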

"Structured AI governance is no longer a competitive differentiator. It is rapidly becoming the baseline expectation for any business deploying AI at scale."

Australian companies should align their internal practices with international best practice wherever possible, particularly as local regulators look to global standards for guidance. The COMPL-AI tool offers a practical starting point for benchmarking large language models against the technical requirements of the EU AI Act. Exploring AI efficiency applications across your sector can also help you identify which framework best fits your operational context.

Benchmarks, results, and what audits reveal

If you assume the AI tools you are using have already been thoroughly vetted, recent benchmark data should give you pause. Empirical benchmarks show that large language models (LLMs) consistently struggle with robustness and safety, and no models achieved perfect fairness scores in independent evaluations.

To put a number on it: GPT-4 Turbo, one of the most capable models available, scored 0.84 for robustness but only 0.71 for fairness in structured testing. That gap matters enormously when your AI is making decisions that affect customers or staff.

Common risks that audits surface include:

- Bias in outputs: Models trained on skewed data reproduce and sometimes amplify those skews.

- Hallucinations: AI systems confidently generating false or misleading information.

- Adversarial vulnerabilities: Susceptibility to deliberate manipulation through crafted inputs.

- System blind spots: Consistent failure on specific demographic groups or edge-case scenarios.

- Non-discrimination failures: Difficulty maintaining equitable treatment across protected characteristics.

For Australian businesses, this data reinforces a critical point: even market-leading AI systems are imperfect. Understanding automation planning pitfalls before you scale is essential to avoiding costly corrections later.
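Bias in outputs and group-specific blind spots can be checked with very little code once decisions are logged alongside a group attribute. The sketch below computes per-group selection rates and the ratio between the lowest and highest rate. The group labels and outcome counts are made up, and the 0.8 cut-off mentioned afterwards is a rule of thumb borrowed from US employment guidance, not an Australian legal test.

```python
from collections import defaultdict


def selection_rates(records):
    """Share of favourable outcomes per group.

    `records` is an iterable of (group, approved) pairs.
    """
    totals, positives = defaultdict(int), defaultdict(int)
    for group, approved in records:
        totals[group] += 1
        positives[group] += int(approved)
    return {g: positives[g] / totals[g] for g in totals}


def disparate_impact_ratio(rates):
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    return min(rates.values()) / highest if highest else 1.0


# Made-up outcomes for two hypothetical groups, A and B.
decisions = (
    [("A", True)] * 60
    + [("A", False)] * 40
    + [("B", True)] * 42
    + [("B", False)] * 58
)
rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)
print(rates, round(ratio, 2))
```

In this made-up data, group B is approved 42% of the time against 60% for group A, giving a ratio of about 0.7. Under the illustrative 0.8 rule of thumb that would warrant a closer look, though a low ratio is a prompt for investigation rather than proof of discrimination.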

Edge cases and challenges: What most AI audits miss

Even well-designed audits have blind spots. Auditing so-called black-box models, where the internal logic is hidden or proprietary, is both technically and legally challenging. You can observe inputs and outputs, but the reasoning in between remains opaque.

Common audit blind spots include:

- Audit awareness: Some AI systems behave differently when they detect they are being evaluated.

- Adversarial manipulation: Inputs specifically crafted to fool the model in ways standard tests do not catch.

- Systemic and societal bias: Bias embedded in social structures that training data reflects but auditors may not recognise.

- Hallucination risks: Generative models producing plausible but factually incorrect outputs, as explored in detail by Australian AI hallucination research.

- Limited third-party access: Vendors may restrict auditor access to model internals, limiting audit depth.

Edge cases and nuances such as these are increasingly recognised as frontier challenges in AI auditing practice.
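Because a black-box model exposes only inputs and outputs, one practical probe is to rewrite each input in a way that should not change the answer and count how often the output stays the same. The sketch below is a minimal version of that idea. `toy_model` and `add_noise` are hypothetical stand-ins for a real system and a real perturbation strategy.

```python
def consistency_rate(model, prompts, perturb):
    """Fraction of prompts whose output is unchanged after a harmless rewrite."""
    if not prompts:
        return 1.0
    stable = sum(model(p) == model(perturb(p)) for p in prompts)
    return stable / len(prompts)


def add_noise(prompt):
    """Hypothetical perturbation: change casing and add trailing whitespace."""
    return prompt.upper() + "  "


def toy_model(prompt):
    """Stand-in classifier that is deliberately sensitive to casing."""
    return "approve" if "good history" in prompt else "review"


prompts = ["Applicant has good history", "Applicant has missed payments"]
print(consistency_rate(toy_model, prompts, add_noise))
```

Here the toy model flips its answer on the first prompt once the casing changes, so only half the prompts are stable. A real audit would use many more prompts and several perturbation types, and a test like this still cannot reveal why the model changed its answer.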

Pro Tip: Do not rely solely on technical audits. Socio-technical reviews, which examine how people interact with AI systems in real workflows, often uncover risks that purely technical assessments miss entirely.

Australian privacy law adds another layer of complexity. Restrictions on data sharing may limit what a third-party auditor can access, particularly in healthcare or financial services. Understanding the AI implementation benefits alongside these constraints helps you build a realistic audit scope from the start.

Australian standards, compliance, and the future of AI audits

Australia's regulatory landscape for AI is evolving quickly. AI audits currently align with voluntary frameworks such as the National AI Centre (NAIC) guidance, the Government AI technical standard, and OAIC privacy guidelines, but there is a clear shift towards mandatory requirements for high-risk AI applications.

Here are the practical steps Australian businesses should take now:

- Inventory your AI use: Catalogue every AI tool or system your business uses, including third-party software with embedded AI.

- Conduct a risk assessment: Identify which systems pose the highest risk to customers, staff, or operations.

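- Complete a Privacy Impact Assessment (PIA): Treat this as essential for systems handling sensitive personal information. PIAs are mandatory for Australian Government agencies on high privacy risk projects and are recommended practice for other organisations.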

- Document everything: Maintain records of model decisions, training data sources, and audit outcomes.

- Establish human oversight: Ensure a qualified person can review, override, or shut down AI decisions where needed.
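The steps above can be captured in a simple inventory that assigns each system a coarse risk tier and lists the follow-up actions it triggers. This is a sketch under stated assumptions: the fields, the tiering rules, and the example systems are hypothetical, and the high-risk domains are simply the examples this article names (credit, employment, health).

```python
from dataclasses import dataclass

# Assumption: the high-risk examples named in this article.
HIGH_RISK_DOMAINS = {"credit", "employment", "health"}


@dataclass
class AISystem:
    name: str
    vendor: str
    domain: str  # business area the system influences
    handles_personal_data: bool
    human_can_override: bool


def risk_tier(system):
    """Assign a coarse, illustrative risk tier to one inventory entry."""
    if system.domain in HIGH_RISK_DOMAINS:
        return "high"
    if system.handles_personal_data:
        return "medium"
    return "low"


def audit_actions(system):
    """List the follow-up actions this entry triggers."""
    actions = []
    if system.handles_personal_data:
        actions.append("complete a Privacy Impact Assessment")
    if risk_tier(system) == "high" and not system.human_can_override:
        actions.append("establish human oversight")
    return actions


inventory = [
    AISystem("resume-screener", "ExampleVendor", "employment", True, False),
    AISystem("chat-widget", "ExampleVendor", "marketing", False, True),
]
for system in inventory:
    print(system.name, risk_tier(system), audit_actions(system))
```

Even a spreadsheet with these columns achieves the same result. The point is that every system, including third-party software with embedded AI, gets a tier and an owner before the audit starts.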

Practical tips for SME compliance include:

- Start with voluntary audits even if they are not yet required. Regulators notice proactive behaviour.

- Treat high-risk AI (such as systems affecting credit, employment, or health) as if mandatory audits already apply.

- Use the Australian Government AI technical standard as a free baseline reference.

- Integrate audit findings into your broader enterprise risk management process.

For businesses in regulated sectors, understanding AI in Australian professional services provides useful context on how compliance obligations are already shaping AI adoption.

Expert perspectives: How to get real value from your AI audits

The most effective AI audits are iterative, socio-technical, and ideally conducted by an independent third party. As noted in AI auditing research on societal challenges, third-party audits carry significantly more credibility with regulators, customers, and partners than self-assessments alone.

"An audit that only checks technical performance misses half the picture. The real value comes from understanding how AI decisions affect people, processes, and trust within your specific business context."

To align your AI audits with business strategy, consider the following:

- Start with an inventory: You cannot audit what you have not mapped. Know every AI touchpoint in your operations.

- Build continuous improvement cycles: Treat each audit as a baseline, not a finish line.

- Integrate with risk management: AI audit findings should feed directly into your enterprise risk register.

- Plan for scale: As your AI use grows, your audit programme needs to grow with it.

- Budget realistically: SMEs can start lean using government tools and templates, then invest in third-party audits as AI use becomes more critical.
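Feeding audit findings into a risk register can be as simple as scoring each finding and sorting. The sketch below uses a likelihood-times-impact score on assumed 1 to 5 scales with an assumed escalation threshold of 12. The findings themselves are invented examples.

```python
from dataclasses import dataclass


@dataclass
class Finding:
    title: str
    likelihood: int  # assumed scale: 1 (rare) to 5 (almost certain)
    impact: int      # assumed scale: 1 (minor) to 5 (severe)

    @property
    def score(self):
        return self.likelihood * self.impact


def to_risk_register(findings, threshold=12):
    """Sort findings by score and flag those at or above the threshold."""
    ranked = sorted(findings, key=lambda f: f.score, reverse=True)
    return [
        {"title": f.title, "score": f.score, "escalate": f.score >= threshold}
        for f in ranked
    ]


findings = [
    Finding("Chatbot gives outdated pricing", likelihood=4, impact=2),
    Finding("Screening model under-selects one group", likelihood=3, impact=5),
]
register = to_risk_register(findings)
for row in register:
    print(row)
```

If your organisation already has a risk matrix, use its scales and thresholds instead of the assumed ones so AI findings are ranked on the same footing as other enterprise risks.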

For Australian business owners, using risk-based checklists and preparing for high-risk mandates with thorough documentation and human oversight is the most practical path forward in 2026. Revisiting your automation planning insights regularly ensures your audit programme stays aligned with how your AI use actually evolves.

Transform your AI strategy with trusted audits

Understanding AI audits is one thing. Putting them into practice across your specific business context is another challenge entirely. At ORVX AI, we work directly with Australian businesses to design and deliver audit processes that are practical, proportionate, and aligned with both current and emerging regulatory requirements.

Whether you operate in retail, real estate, professional services, or any other sector, our team embeds with your people to map your AI use, identify risks, and build a clear remediation roadmap. We offer expert AI audit services tailored to your industry and risk profile, including specialist support for real estate AI compliance. From voluntary audits to preparing for upcoming mandatory assessments, ORVX AI gives you the confidence to use AI responsibly and competitively across your business.

Frequently asked questions

Are AI audits mandatory for businesses in Australia?

Currently, AI audits are voluntary for most businesses. However, Australia is shifting towards mandatory requirements for high-risk AI use cases as new regulations roll out, particularly for critical infrastructure and sensitive applications.

What types of risks can an AI audit uncover?

AI audits can reveal bias, security flaws, performance issues, non-compliance with standards, ethical risks, and unintended societal impacts at any stage of the system lifecycle.

Do I need a third party to audit my AI systems?

Independent third-party audits offer more credibility and objectivity than self-assessments. They are recommended for regulated or high-risk contexts, and wherever you need to reassure customers and partners.

How often should I conduct an AI audit?

AI audits should be performed before deployment, after major updates, and regularly as part of ongoing risk management. Repeat them whenever models, data, or use cases change significantly.

Are there free tools or templates for AI audits in Australia?

Australian businesses can use public resources such as the NAIC Guidance and the Government AI technical standard as practical starting points for building an audit framework without significant upfront cost.
