TL;DR:

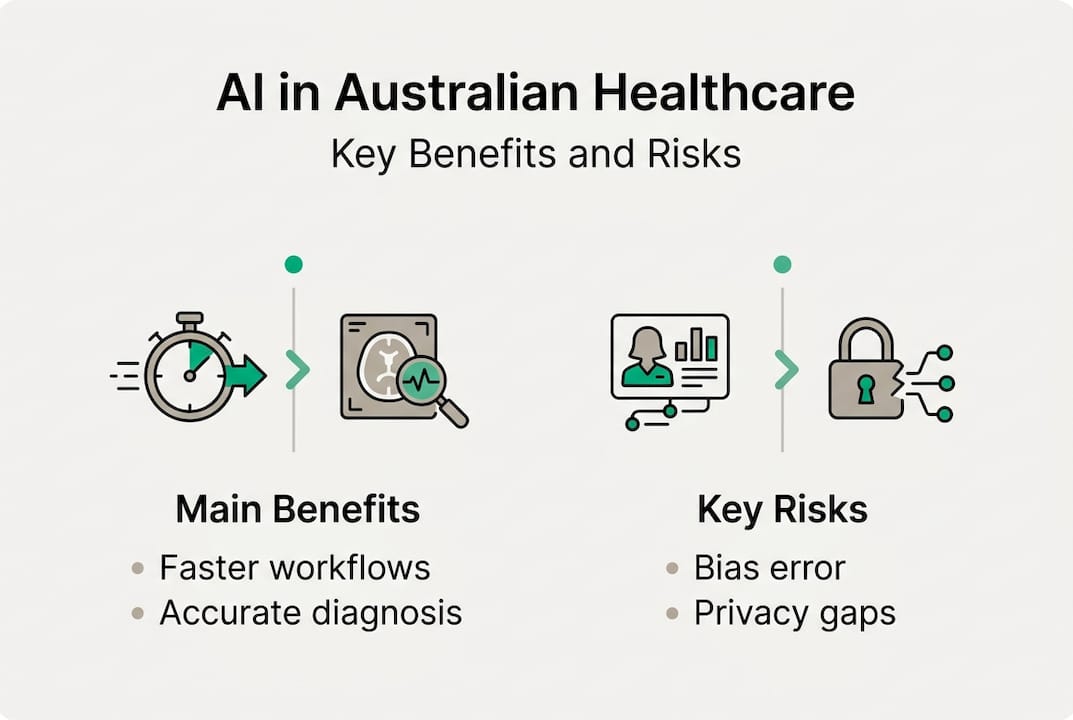

- AI tools are already improving Australian healthcare by automating documentation, diagnostics, and billing. These deployments have saved clinicians significant time and improved accuracy. Bias, limited transparency, and regulatory compliance remain critical considerations for safe AI adoption.

Australian hospitals and clinics are no longer waiting for AI to arrive. It's already here, and the numbers are striking. AI tools are freeing up 59 minutes per clinician per day while cutting overtime by 87% across participating sites. For healthcare decision-makers navigating workforce pressures, rising patient volumes, and tightening budgets, that kind of impact is impossible to ignore. This guide walks through the applications already running in Australian settings, the benefits backed by local data, the risks you must plan for, and the regulatory landscape you need to understand before scaling any AI initiative.

Table of Contents

- How AI is being used in Australian healthcare today

- Core benefits of AI: From efficiency to better care

- Major risks: Bias, safety, and trust in clinical AI

- Navigating the regulatory landscape: Compliance for AI in Australian healthcare

- Our take: How decision-makers can adopt AI in healthcare, what most miss

- Accelerate safe AI adoption in your hospital or clinic

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| AI is already in use | Australian hospitals use AI for documentation, diagnostics and efficiency, not just future plans. |
| Substantial benefits | AI saves clinicians time and boosts diagnostic accuracy, with strong data supporting efficiency gains. |
| Risks require vigilance | Bias, transparency gaps and accountability concerns mean careful governance is essential. |
| Compliance is evolving | AI tools must meet TGA regulations and evidence standards; leaders should watch for new Australian frameworks. |
| Start small, build trust | Begin with low-risk applications, keep humans involved and audit continuously for ethical adoption. |

How AI is being used in Australian healthcare today

Forget the science fiction version of AI in hospitals. What's actually deployed right now is far more practical, and far more impactful. AI methodologies now support imaging diagnostics, electronic health record (EHR) analysis, clinical scribing, and predictive analytics across multiple Australian health networks.

Ramsay Health Care has trialled AI scribing tools that listen to clinical consultations and generate structured notes automatically, freeing clinicians from hours of administrative burden each week. AI-driven billing systems have saved hospitals millions of dollars by identifying coding errors and claim discrepancies that would otherwise slip through manual review. These aren't pilots on a whiteboard. They're running in real wards and real clinics right now.

75% of healthcare providers are actively experimenting with AI, and government investment in the sector continues to rise. The range of tools being tested spans several key domains:

- Imaging diagnostics: AI models detect polyps, retinal disease, and cardiovascular markers from scans with accuracy rates that rival those of senior specialists.

- Predictive bed management: Neural networks forecast patient admissions and discharge patterns, helping hospitals allocate resources before bottlenecks occur.

- Clinical documentation: AI scribes capture consultation details in real time, reducing the after-hours documentation burden that burns out clinicians.

- Billing and coding: Automated systems cross-check billing codes against clinical records, dramatically reducing claim errors.

- Patient journey prediction: Gradient Boosted Tree models and similar approaches outperform traditional statistical tools in predicting readmission risk and deterioration.

Here's a snapshot of how AI compares to traditional approaches across common healthcare tasks:

| Task | Traditional approach | AI-assisted approach |
|---|---|---|
| Imaging review | Manual specialist review | Automated flagging with specialist confirmation |
| Documentation | Clinician typed or dictated | Real-time AI scribing |
| Bed management | Historical averages | Predictive modelling |
| Billing accuracy | Manual coding audits | Automated claim validation |
| Deterioration alerts | Nurse observation | Continuous data monitoring |

"The most impactful AI tools in healthcare today aren't replacing clinicians. They're giving clinicians their time back so they can focus on patients."

For a broader view of how these tools fit together, exploring AI in healthcare applications offers a useful starting point for decision-makers mapping their own priorities.

Having covered how current AI initiatives are shaping the sector, let's turn to the operational and clinical benefits backed by the latest Australian data.

Core benefits of AI: From efficiency to better care

The case for AI in Australian healthcare isn't built on theory. It's built on measurable outcomes already recorded across local health systems.

On the operational side, the gains are substantial. AI tools have freed up 59 minutes per clinician per day, achieved 97% accuracy in automated billing, and earned 97% staff satisfaction with AI-generated documentation quality. That's not a marginal improvement. It's a fundamental shift in how clinicians spend their working day.

On the clinical side, the results are just as compelling. AI-powered imaging has achieved 93.9% accuracy in cardiovascular disease risk detection, while Australia has committed $30 million in targeted AI investment to strengthen these capabilities further. Retinal screening programs using AI are identifying diabetic eye disease earlier than traditional pathways, and polyp detection tools are improving colonoscopy outcomes by catching lesions that human reviewers can miss.

Here's a summary of the most significant benefits already demonstrated in Australian settings:

| Benefit area | Measured outcome |
|---|---|
| Clinician time saved | 59 minutes per day per clinician |
| Billing accuracy | 97% automated claim accuracy |
| Staff satisfaction with documentation | 97% reported satisfaction |
| CVD risk detection via imaging | 93.9% accuracy |
| Government AI investment | $30 million committed |
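To put the time saving in planning terms, it helps to scale the per-clinician figure up to a whole site. The sketch below is illustrative arithmetic only: the 59 minutes comes from the data above, while the headcount and the number of working days are hypothetical inputs you would replace with your own.

```python
# Illustrative arithmetic only. The daily saving is the reported figure;
# headcount and working days are assumptions, not data from any site.
MINUTES_SAVED_PER_DAY = 59  # per clinician, as reported above


def annual_hours_released(clinicians: int, working_days: int) -> float:
    """Total clinician hours freed per year at the reported daily saving."""
    return clinicians * working_days * MINUTES_SAVED_PER_DAY / 60


if __name__ == "__main__":
    # Hypothetical site: 120 clinicians, each working 220 days a year.
    hours = annual_hours_released(clinicians=120, working_days=220)
    print(f"{hours:,.0f} clinician hours released per year")  # 25,960
```

For that hypothetical site the result is 25,960 hours a year. Real savings will depend on how widely the tools are adopted and how consistently they are used.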

The pathway to achieving these outcomes typically follows a practical sequence:

- Start with documentation automation to deliver immediate time savings with minimal clinical risk.

- Introduce AI billing and coding tools to reduce revenue leakage and administrative overhead.

- Pilot predictive bed management to improve scheduling and reduce emergency bottlenecks.

- Expand into diagnostic support tools with appropriate clinical validation and governance in place.

- Build towards personalised care planning using AI-generated patient risk profiles and treatment recommendations.

Pro Tip: Don't underestimate the cultural dimension. Clinicians who are involved in selecting and testing AI tools are far more likely to trust and use them effectively. Engagement early in the process pays dividends long after go-live.

For healthcare teams focused on the patient-facing dimension, reviewing how AI-driven patient experience approaches are shaping care delivery can offer practical framing for internal conversations with clinical leads.

With these proven benefits in mind, let's consider what decision-makers need to watch for before wide-scale adoption.

Major risks: Bias, safety, and trust in clinical AI

The benefits are real, but so are the risks. Healthcare AI that performs brilliantly in trials can still fail in practice if the underlying risks aren't addressed head-on.

Bias is the most serious and most underappreciated concern. 66.7% of clinicians have directly observed AI producing misleading results for minority patient groups or atypical presentations. When training data skews towards particular demographics, the model learns patterns that don't generalise across the full patient population. In high-stakes clinical decisions, that gap can cause real harm.

Transparency is the second major barrier. Many AI models operate as "black boxes" where the reasoning behind a recommendation is hidden from the clinician. This erodes trust and creates accountability grey zones. If an AI recommendation contributes to an adverse outcome, it's currently unclear in many cases where legal and professional responsibility sits.

Key risks every decision-maker must plan for:

- Algorithmic bias: Models trained on narrow datasets produce unreliable results for diverse patient groups.

- Lack of explainability: Clinicians can't interrogate a model's reasoning, making it harder to override confidently.

- Data privacy and storage: Over half of Australian health stakeholders want local data storage requirements to protect patient information.

- Accountability gaps: No clear legal framework currently assigns responsibility when AI contributes to a clinical error.

- Integration risks: Poorly integrated AI tools can disrupt existing workflows and introduce new points of failure.

"An AI system that clinicians don't trust won't be used, regardless of how accurate it tests in isolation."

Pro Tip: Build a human-in-the-loop process from day one. Every AI recommendation in a clinical setting should require clinician confirmation before action is taken. This isn't just best practice. It's the foundation of trustworthy AI deployment.
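In software terms, a human-in-the-loop process is a gate: nothing downstream runs until a named clinician has confirmed the recommendation. The following is a minimal sketch of that idea. The class names, fields, and identifiers are all hypothetical and are not drawn from any particular clinical system.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Status(Enum):
    PENDING = "pending"
    CONFIRMED = "confirmed"
    REJECTED = "rejected"


@dataclass
class Recommendation:
    """An AI suggestion awaiting clinician review (hypothetical structure)."""
    patient_id: str
    suggestion: str
    status: Status = Status.PENDING
    reviewed_by: Optional[str] = None


def review(rec: Recommendation, clinician_id: str, accept: bool) -> Recommendation:
    """Record a clinician's decision and who made it."""
    rec.status = Status.CONFIRMED if accept else Status.REJECTED
    rec.reviewed_by = clinician_id
    return rec


def act_on(rec: Recommendation) -> str:
    """Refuse to act on anything a clinician has not explicitly confirmed."""
    if rec.status is not Status.CONFIRMED:
        raise PermissionError("Clinician confirmation required before action")
    return f"Actioned for {rec.patient_id}: {rec.suggestion} (confirmed by {rec.reviewed_by})"
```

Recording who confirmed each recommendation also creates the audit trail that governance committees will ask for later.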

Thorough AI audit strategies are essential for identifying bias and performance drift before problems escalate. Experience with AI adoption in professional services offers useful parallels for healthcare teams designing governance frameworks.
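A bias audit can start simply: compare how often the model is right for each patient group and flag any group that trails the rest. Here is one way to sketch that check, assuming you can export each case as a group label, the model's prediction, and the confirmed outcome. The function names and the 5-point gap threshold are illustrative assumptions, not a recognised standard.

```python
from collections import defaultdict


def subgroup_accuracy(records):
    """Return accuracy per subgroup from (group, predicted, actual) tuples."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for group, predicted, actual in records:
        total[group] += 1
        correct[group] += int(predicted == actual)
    return {group: correct[group] / total[group] for group in total}


def flag_gaps(accuracy_by_group, max_gap=0.05):
    """List subgroups whose accuracy trails the best group by more than max_gap."""
    best = max(accuracy_by_group.values())
    return sorted(g for g, acc in accuracy_by_group.items() if best - acc > max_gap)


if __name__ == "__main__":
    # Synthetic records for illustration only, not real patient data.
    sample = [
        ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
        ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 1, 1), ("group_b", 0, 1),
    ]
    print(flag_gaps(subgroup_accuracy(sample)))  # ['group_b']
```

Accuracy alone is a coarse measure. A fuller audit would also compare false negative and false positive rates by group, since a missed diagnosis and a false alarm carry very different clinical costs.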

Given these critical risks, regulatory and governance frameworks are becoming essential for safe, effective AI integration.

Navigating the regulatory landscape: Compliance for AI in Australian healthcare

Australia has a clearer regulatory framework for healthcare AI than many decision-makers realise. Understanding it is not optional. It's a prerequisite for any responsible deployment.

The Therapeutic Goods Administration (TGA) regulates clinical AI tools as software as a medical device (SaMD), subject to strict classification, registration, and post-market surveillance requirements. The recent national review found no major regulatory gaps, but it strongly urged greater evidence generation and clearer national leadership to keep pace with the speed of AI development.

International frameworks, particularly NICE guidance from the UK, offer robust evaluation templates that Australian health organisations can adapt for local compliance and evidence reporting. These frameworks prioritise pragmatic trials, real-world validation, and transparent reporting, exactly the kind of evidence TGA and funders will expect.

Key compliance priorities for Australian healthcare decision-makers:

- Confirm whether your AI tool is classified as SaMD under TGA rules and complete registration if required.

- Establish a post-market monitoring plan to detect performance drift and adverse events after deployment.

- Document the evidence base for any AI tool entering clinical workflows, including training data sources and validation results.

- Align data storage and privacy practices with Australian Privacy Act requirements and any state-specific health data laws.

- Engage your clinical governance committee early, before procurement, not after.

A practical sequence for regulatory compliance:

- Classify the AI tool (clinical versus operational) to determine TGA obligations.

- Complete a data privacy impact assessment before connecting to patient records.

- Run a supervised pilot with documented outcomes before full deployment.

- Establish ongoing performance monitoring with defined review triggers.

- Train clinical staff on the tool's limitations and the escalation process for anomalies.
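"Defined review triggers" work best when they are written down as numbers rather than left to judgement. As a rough sketch, the monitor below tracks how often the AI output agrees with clinician-confirmed outcomes over a rolling window and signals a review when agreement falls too far below the validated baseline. The class, the window size, and the tolerance are hypothetical choices your governance committee would need to set.

```python
from collections import deque


class PerformanceMonitor:
    """Track rolling agreement between AI output and clinician-confirmed outcomes."""

    def __init__(self, baseline: float, tolerance: float = 0.05, window: int = 200):
        self.baseline = baseline      # accuracy measured during the supervised pilot
        self.tolerance = tolerance    # acceptable drop before a review is required
        self.results = deque(maxlen=window)  # keeps only the most recent cases

    def record(self, ai_was_correct: bool) -> None:
        """Log one case once the clinician-confirmed outcome is known."""
        self.results.append(ai_was_correct)

    def rolling_accuracy(self) -> float:
        """Share of correct results in the current window."""
        if not self.results:
            raise ValueError("No results recorded yet")
        return sum(self.results) / len(self.results)

    def review_triggered(self) -> bool:
        """True once a full window falls below baseline minus tolerance."""
        if len(self.results) < self.results.maxlen:
            return False  # too few cases to judge reliably
        return self.rolling_accuracy() < self.baseline - self.tolerance
```

Waiting for a full window before triggering avoids false alarms from a handful of early cases, at the cost of slower detection. A shorter window reacts faster but is noisier.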

For teams at the planning stage, reviewing implementation steps for AI compliance and a step-by-step implementation framework offer a practical roadmap to get from intent to compliant deployment.

Armed with this regulatory clarity, senior leaders can approach pilot projects and scaling with genuine confidence.

Our take: How decision-makers can adopt AI in healthcare, what most miss

Here's what we observe consistently across healthcare organisations exploring AI: the instinct is to begin with the most exciting clinical tools. Diagnostic AI. Predictive imaging. Treatment recommendation engines. These are compelling, but they also carry the highest regulatory burden, the greatest clinical risk, and the longest validation timelines.

The organisations making the fastest and safest progress start with operational AI. Documentation automation, staff rostering, billing validation. These tools deliver measurable results quickly while building the internal capability, governance muscle, and staff trust needed to tackle more complex clinical applications later.

A phased approach isn't timidity. It's strategy. Each phase builds the data governance infrastructure, the audit culture, and the frontline buy-in that makes the next phase safer and more effective. Inclusive data sets and genuine clinician co-design aren't optional extras. They're what separates AI that actually gets used from AI that collects dust.

If you're ready to map out what that phased approach looks like for your organisation, building an AI strategy is a practical place to start.

Accelerate safe AI adoption in your hospital or clinic

Knowing the landscape is one thing. Navigating it with confidence is another. ORVX AI works directly with Australian healthcare organisations to design and deploy AI solutions that are compliant, practical, and built around your specific workflows, not generic templates.

Whether you're exploring your first documentation automation tool or planning a broader rollout of health industry AI solutions, our team embeds with yours to understand your environment, identify the right opportunities, and manage implementation from audit to go-live. As trusted AI integration consultants with a local presence and a vendor-agnostic approach, we help you move from intention to measurable outcomes. Reach out to start a tailored strategy conversation for your hospital or clinic, and explore AI for healthcare solutions designed for the Australian context.

Frequently asked questions

What are the main uses of AI in Australian healthcare right now?

AI applications span automated clinical scribing, imaging diagnostics, billing automation, and predictive patient care analytics. These are active deployments across Australian hospitals and clinics, not future plans.

What are the biggest risks of using AI in clinical settings?

Bias, lack of transparency, unclear accountability, and data privacy vulnerabilities are the most pressing risks. Each requires active governance, not passive monitoring.

How is AI in healthcare regulated in Australia?

The TGA classifies clinical AI as SaMD, requiring registration, validation, and ongoing surveillance. International frameworks like NICE guidance support evidence generation and evaluation best practice.

How can hospitals safely start using AI?

Begin with low-risk operational tools like documentation automation, maintain human oversight at every decision point, and build audit and governance processes before expanding to clinical applications.